Understanding issue with automatic buffer swapping


Hi,

I've been using JOGL for a while and things have been working as expected. However, I recently observed an issue that I eventually tracked down to automatic buffer swapping, but that based on the javadocs, should not have occurred as far as I understand. This is part of the setup code:

final GLCapabilities caps = new GLCapabilities(GLProfile.get(GLProfile.GL4));

caps.setBackgroundOpaque(true);

caps.setDoubleBuffered(true);

caps.setRedBits(8);

caps.setGreenBits(8);

caps.setBlueBits(8);

caps.setAlphaBits(8);

canvas = new GLCanvas(caps);

canvas.addGLEventListener(this);

canvas.setAutoSwapBufferMode(false); // <-- note this one

According to the docs, when auto buffer swapping is disabled, it's the client's responsibility to manually invoke the swapBuffers() method, which my application did after the scene had been rendered into a viewport from the camera's PoV.

canvas.swapBuffers();

The expected result was that the scene would be rendered, but the actual result was that the entire screen was black. Not only that, but this problem is only present when the application runs on my desktop, using NVIDIA GTX-770 w/ driver 375.26 on Kubuntu 16.10 x64. The problem has never been observed on my laptop, using NVIDIA GTX-960M w/ driver 367.57. I tried several drivers on the desktop, including one that was known to work before I started updating drivers to see if the problem would go away; I can't remember which version, but it was older than the 367 on the laptop.

I've worked around the problem by eliminating manual buffer swapping from the client (i.e. I left auto buffer swapping enabled, which is the default, and removed the manual call to swapBuffers).

I'd appreciate it if someone could shed some light on whether there's really a JOGL issue here somewhere, or whether my understanding of the javadoc was incorrect.

Thanks in advance,
-r

---

PS: If interested, I chose to swap buffers manually because a scene may have several cameras, with each one drawing into a different viewport, and I wanted to make sure the buffers would be swapped only once, after every camera had finished rendering from its own PoV, rather than getting swapped automatically for each camera in a single pass. (Please let me know if this is not really a good way to go about this.)
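To make the swap-once-per-frame idea from the PS concrete, here is a minimal sketch that uses no JOGL classes at all. `Surface`, `CountingSurface`, and `FrameLoop.renderFrame` are hypothetical names invented for illustration (they are not part of JOGL or sage2), and the sketch only counts swaps. It assumes each camera is drawn in its own pass, so that swapping after every pass means one swap per camera.

```java
import java.util.List;

/** Hypothetical stand-in for a drawable surface; this is not a JOGL type. */
interface Surface {
    void draw(String cameraName); // render one camera into its viewport
    void swapBuffers();           // present the back buffer
}

/** Counts calls so the two swapping strategies can be compared. */
final class CountingSurface implements Surface {
    int draws;
    int swaps;

    @Override public void draw(String cameraName) { draws++; }
    @Override public void swapBuffers() { swaps++; }
}

final class FrameLoop {
    /**
     * Renders every camera, then presents the frame.
     * With swapPerCamera the buffers are swapped after each camera;
     * otherwise they are swapped once, after the last camera.
     */
    static void renderFrame(Surface surface, List<String> cameras, boolean swapPerCamera) {
        for (String camera : cameras) {
            surface.draw(camera);
            if (swapPerCamera) {
                surface.swapBuffers();
            }
        }
        if (!swapPerCamera) {
            surface.swapBuffers();
        }
    }
}
```

With four cameras, the per-camera strategy records four swaps per frame and the per-frame strategy records one, which is the difference the PS is concerned about.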

Re: Understanding issue with automatic buffer swapping

I doubt the issue is related to how you initialise your application. Since the code works on some system configurations and the issue only appears on others, I expect it is related to how OpenGL state is manipulated at runtime and to the life cycle of data stored on the GPU.

If you can provide a link to your full application, preferably stored in a git tree, then the issue can be studied in more detail to see whether your code has data life-cycle or race issues.

The expected behaviour of JogAmp JOGL is documented in the JOGL JUnit tests; if your code follows the same coding practices as the JUnit tests, then we know it will work reliably on many system configurations.


In reply to this post by xghost

I agree with Xerxes. Moreover, JogAmp's Ardor3D Continuation relies heavily on manual buffer swapping and it works like a charm, but you shouldn't swap buffers just anywhere.

Finally, JOGL handles a lot of aspects for you without preventing you from doing them your own way. It's easy to "forget" to handle some corner cases when you have to mimic some JOGL behaviours in your own code.

Julien Gouesse


In reply to this post by Xerxes Rånby

That's my thought. I still think this problem could also be a card- or driver-specific issue, but there's not much I think I could do if that turns out to be the case. Trying to rule out any potential JOGL-related issue (i.e. either internal or in how I've used it) seemed like an easier first step.

At the time of this writing, the git tree can be found here: https://gitlab.com/ghost-in-the-zsh/sage2/

The relevant commits are as follows:

1. The commits where the issue got introduced are 49e879f5263ee1d15a479cbd51b9ea068629f8e0 and d6b0c67adac1fe4a7c9ec22ef869d71171df66e1.
2. Commit/diff with workaround: 55bcea2efd78fb2272aaffea161a1e5c4f6d2690

Since most development takes place on the laptop, I didn't observe the issue until I tried running the tests on my desktop several months later, and got it "fixed" yesterday before posting.

The relevant files are:

1. The GL4RenderSystem implements the GLEventListener and contains the GLCanvas setup I had quoted before. These are the commits before and after the workaround.
2. The GenericSceneManager was invoking RenderSystem.swapBuffers(), which internally invoked canvas.swapBuffers() as shown above. These are the commits before and after the workaround.

Although you might not need to go this far, please note that if you actually intend to run test cases from the tests package, you should make sure to check out the feature/final-updates-and-documentation branch of the project and also add this separate math library to your class path.

In hindsight, I did wonder about this based on some odd results I saw. If looking at a commit without the workaround, the tests.ray.sage2.scene.MultiViewportTest does display a scene, but the lower-right camera/viewport does not show its content (the test has 4 cameras looking at the same scene from different angles), while the other 3 cameras seem to display the scene correctly.

I remember the thought of a race condition crossing my mind, since the GLEventListener runs on a separate thread, which could allow the scene manager to continue and end up asking the render system canvas to swap the buffers before the last camera had a chance to finish. OTOH, it didn't seem to make sense that other tests with a single camera for the scene (e.g. NodeBasedCameraTest) would display a completely black screen.

EDIT: I should note that, depending on which combination of buffer swapping mode and manual swapBuffers invocation you use, you could actually see enough through the severe flickering to realize that the scene had been rendered, even if it was not being displayed properly.

I've not read the JOGL unit tests; I was relying on the javadocs. If, after looking at the code above, you have a more specific suggestion, I'll appreciate it.

What about the reason I provided for wanting to do it? Is it incorrect to think that, if I have different cameras rendering into different viewports in the same window, JOGL will swap buffers for each camera per frame rather than just once per frame (i.e. only after all cameras have finished rendering)? More concretely, if I have 4 cameras, I don't want 4 buffer swaps; rather, I want only 1 buffer swap after all 4 cameras have rendered into their viewports.

While I've heard of the existence of Ardor3D Continuation before, I'm quite time constrained and don't really have the time to search through non-trivial and unfamiliar code bases aimlessly just hoping to discover something, so if you have a specific suggestion on what the right way to do this is, I'd appreciate it.

I understand that, but I would've at least expected the issue to have also been observed on my laptop if I had simply failed to do something I should have. That never happened. Not saying I'm dismissing the possibility; just saying that the evidence at this time seems to suggest something different.

Thanks,
-r

Re: Understanding issue with automatic buffer swapping

I am able to clone jglm; however, some permission setting prevents non-project members from viewing or cloning sage2.

I've updated permissions to match jglm's. I think you should be able to access it now. Sorry for the hiccup. Let me know if you still have issues accessing it.

Thanks,
-r

Re: Understanding issue with automatic buffer swapping

I can clone the repository now, thank you. I will test and investigate using my OpenGL 4.5 systems.

git clone https://gitlab.com/ghost-in-the-zsh/sage2
git clone https://gitlab.com/ghost-in-the-zsh/jglm

Dependencies:
testng http://testng.org/doc/index.html
jogamp https://jogamp.org

Re: Understanding issue with automatic buffer swapping


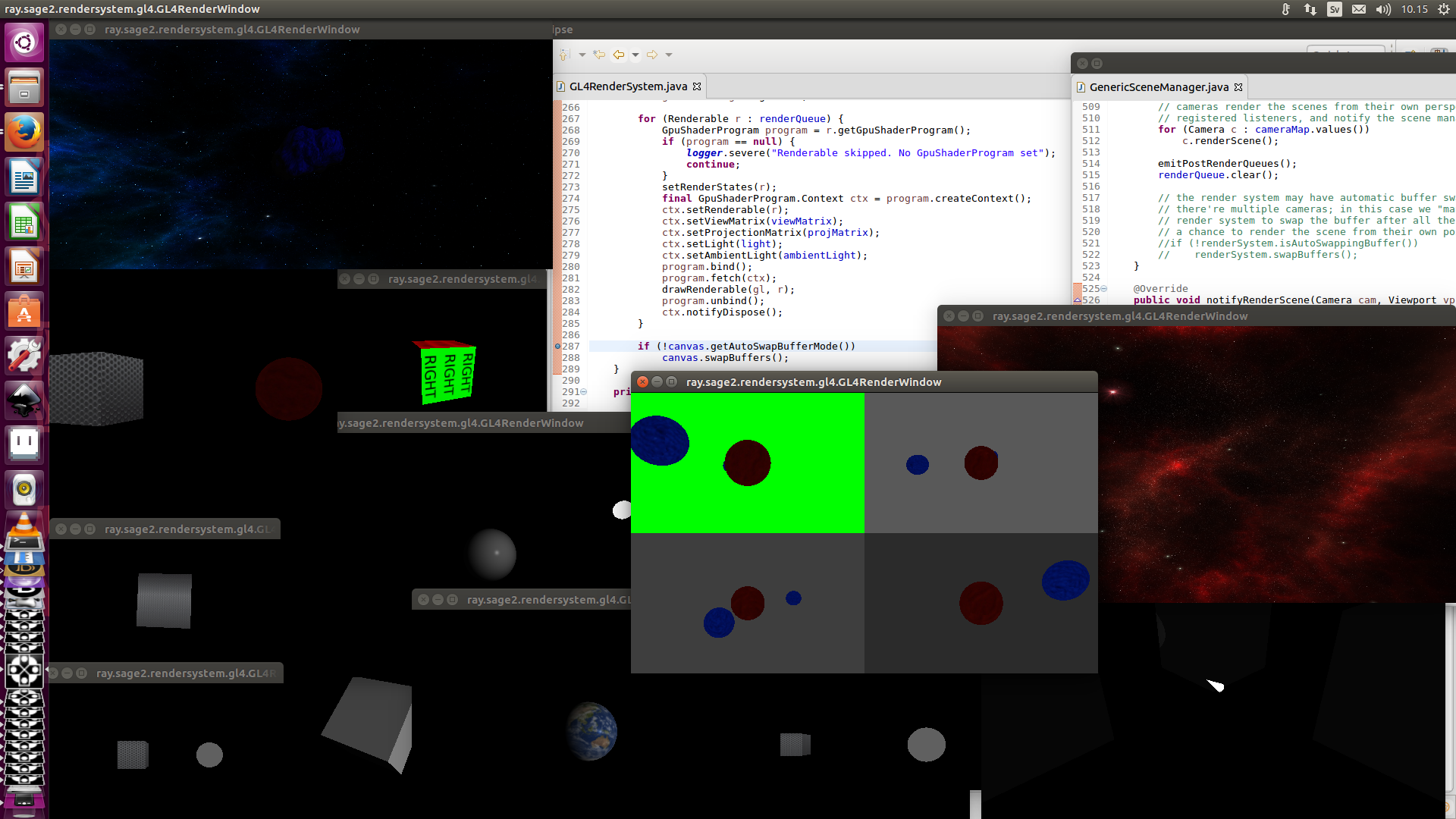

In reply to this post by xghost

You want to invert the logic on line 287 of GL4RenderSystem and use

if (!canvas.getAutoSwapBufferMode())

so that your buffers will get swapped when you have disabled automatic buffer swapping.

https://gitlab.com/ghost-in-the-zsh/sage2/blob/master/src/ray/sage2/rendersystem/gl4/GL4RenderSystem.java#L287

After fixing that, you can remove all the workaround swap logic from GenericSceneManager: remove lines 517-522.

https://gitlab.com/ghost-in-the-zsh/sage2/blob/master/src/ray/sage2/scene/generic/GenericSceneManager.java#L517-522

Cheers
Xerxes
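The inverted check can be sketched in isolation as follows. This is an illustration only: `FakeCanvas` is a hypothetical stand-in that mimics just the three canvas methods named in this thread, and `SwapGuard.swapIfManual` is an invented helper, not code from sage2.

```java
/** Hypothetical stand-in exposing only the canvas methods used in this thread; not a JOGL type. */
final class FakeCanvas {
    private boolean autoSwap = true; // automatic swapping is on unless disabled
    int swaps;                       // number of manual swaps performed

    void setAutoSwapBufferMode(boolean enabled) { autoSwap = enabled; }
    boolean getAutoSwapBufferMode() { return autoSwap; }
    void swapBuffers() { swaps++; }
}

final class SwapGuard {
    /** Swaps manually only when automatic swapping has been turned off. */
    static void swapIfManual(FakeCanvas canvas) {
        if (!canvas.getAutoSwapBufferMode()) {
            canvas.swapBuffers();
        }
    }
}
```

Without the negation, the manual swap would run only while automatic swapping is on, and a canvas with automatic swapping disabled would never be swapped by this code path.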

Re: Understanding issue with automatic buffer swapping


The sage2 tests are running fine, without automatic buffer swapping and with the fix in place, on my desktop GTX system:

GL_VENDOR NVIDIA Corporation
GL_RENDERER GeForce GTX 580/PCIe/SSE2
GL_VERSION 4.5.0 NVIDIA 367.57

I will test on some more systems, since my driver is about the same as on the system where you reported no errors.


Re: Understanding issue with automatic buffer swapping


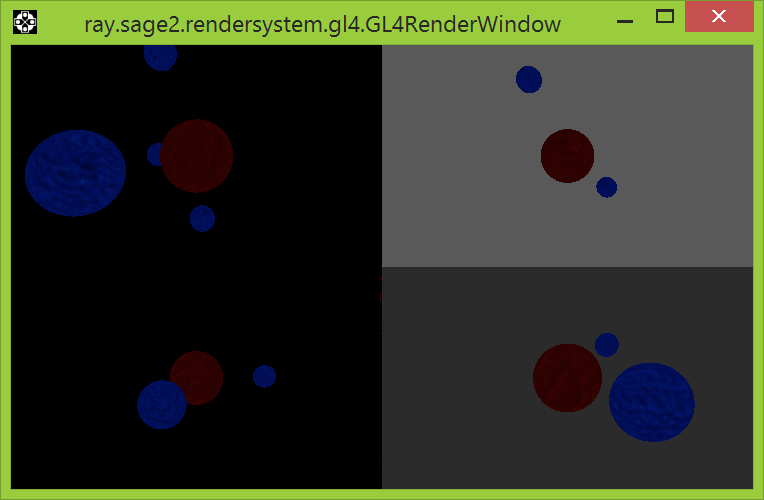

I am encountering some flicker and one buffer that is not cleared / updated properly when running the sage2 test on Windows 8 in combination with the Azul JVM and AWT implementation.

Platform: Java Version: 1.8.0_121 (1.8.0u121), VM: OpenJDK 64-Bit Server VM, Runtime: OpenJDK Runtime Environment
Platform: Java Vendor: Azul Systems, Inc., http://www.azulsystems.com/, JavaSE: true, Java6: true, AWT enabled: true
Swap Interval 1
GL Profile GLProfile[GL4bc/GL4bc.hw]
GL Version 4.5 (Compat profile, arb, compat[ES2, ES3, ES31], FBO, hardware) - 4.5.13399 Compatibility Profile Context 15.200.1062.1004 [GL 4.5.0, vendor 15.200.1062 (Compatibility Profile Context 15.200.1062.1004)]
Quirks [NoDoubleBufferedBitmap, NoSurfacelessCtx]
Impl. class jogamp.opengl.gl4.GL4bcImpl
GL_VENDOR ATI Technologies Inc.
GL_RENDERER AMD Radeon(TM) R5 Graphics
GL_VERSION 4.5.13399 Compatibility Profile Context 15.200.1062.1004
GLSL true, has-compiler-func: true, version: 4.40 / 4.40.0
GL FBO: basic true, full true
GL_EXTENSIONS 285
GLX_EXTENSIONS 24

As can be seen in this image, the top-left quadrant has rendered two extra blue moons, most likely originating from one un-cleared buffer.

It is possible that the issues seen on this system are caused by different buffering in the Azul AWT implementation compared to the Linux implementation. You can remove all AWT differences and AWT issues by switching from AWT to the JogAmp JOGL GLWindow. GLWindow is highly recommended, since it is supported on platforms that do not have AWT.

com.jogamp.newt.opengl.GLWindow


In reply to this post by Xerxes Rånby

Hi Xerxes,

First of all, thanks for your time and help.

Just to double-check, please make sure you're looking at the branch with the latest content (git checkout -b latest origin/feature/final-updates-and-documentation). The workaround commit I linked in the previous post is there (not on master) and does not have the lines. I apologize for not making this clearer before. (I'm just basing that on the URL you posted.)

https://gitlab.com/ghost-in-the-zsh/sage2/blob/feature/final-updates-and-documentation/src/ray/sage2/scene/generic/GenericSceneManager.java#L516
https://gitlab.com/ghost-in-the-zsh/sage2/blob/feature/final-updates-and-documentation/src/ray/sage2/rendersystem/gl4/GL4RenderSystem.java#L230

I went back to the master branch you seem to have looked at (which disables auto swapping) and tested the following, based on your suggestions (slightly different):

// Commented out the manual swapping logic on the GL4RenderSystem file to let the SceneManager do it
// if (canvas.getAutoSwapBufferMode())
//     canvas.swapBuffers();

// On the GenericSceneManager file, removed the if-check, so that swapBuffers() is always invoked
renderSystem.swapBuffers();

I can still observe the problem on my desktop, even though it should always be swapping the buffers like this. It's as if the following were not really swapping the buffers(?)

// https://gitlab.com/ghost-in-the-zsh/sage2/blob/master/src/ray/sage2/rendersystem/gl4/GL4RenderSystem.java#L214-217
@Override
public void swapBuffers() {
    canvas.swapBuffers();
}

Wouldn't it be "preferable" to call swapBuffers only once after all cameras have finished with their viewports (i.e. once per frame) instead of several times? Is trying to call canvas.swapBuffers() outside the GLEventListener thread incorrect usage?

Just for checks, I had experimented with grabbing a context to see if it was that (pseudo-ish), but IIRC, that caused a recursive lock issue with JOGL:

GLContext ctx = canvas.getContext();
ctx.makeCurrent();
canvas.swapBuffers();
ctx.release();

I figured I was using it incorrectly and had reverted it to a simple canvas.swapBuffers(), though the Javadoc didn't seem to indicate it might be a problem.

Thanks again. Much appreciated.
-r

EDIT: Your last post came in as I was writing this one. I'll get to it in a separate reply as needed.
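On the threading question, an OpenGL context can be current on only one thread at a time, so a common general pattern is to hand GL-related work to the thread that owns the context instead of calling it from wherever the request originates. The sketch below shows that pattern with plain `java.util.concurrent` and no JOGL classes. `RenderThread` and the thread name are hypothetical, and nothing here claims to describe how JOGL schedules work internally; JOGL's own `GLAutoDrawable.invoke(...)` appears to serve a comparable purpose, but its javadoc should be checked before relying on it.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

/** Funnels tasks onto one dedicated thread, so that all of them run on the same thread. */
final class RenderThread {
    private final ExecutorService executor = Executors.newSingleThreadExecutor(task -> {
        Thread thread = new Thread(task, "render-thread"); // hypothetical thread name
        thread.setDaemon(true);                            // do not keep the JVM alive
        return thread;
    });

    /** Runs the task on the dedicated thread and blocks until it has finished. */
    <T> T call(Callable<T> task) {
        try {
            return executor.submit(task).get();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for the render thread", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("render task failed", e.getCause());
        }
    }

    /** Stops accepting new tasks; already submitted tasks still run. */
    void shutdown() {
        executor.shutdown();
    }
}
```

A caller on any thread can then submit a task through `call(...)` and know that it executed on the single dedicated thread, in submission order, before `call` returned.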


In reply to this post by Xerxes Rånby

That one I had not seen before. I'll run the tests later today on Windows 7/10 machines to see if I notice any difference. (I had run the tests on Windows 7 some months ago and things were fine at the time. I should be able to update later today.)

The Linux desktop's java -version is:

openjdk version "1.8.0_121"
OpenJDK Runtime Environment (build 1.8.0_121-8u121-b13-0ubuntu1.16.10.2-b13)
OpenJDK 64-Bit Server VM (build 25.121-b13, mixed mode)

The Linux laptop's version is the same as the desktop's:

openjdk version "1.8.0_121"
OpenJDK Runtime Environment (build 1.8.0_121-8u121-b13-0ubuntu1.16.10.2-b13)
OpenJDK 64-Bit Server VM (build 25.121-b13, mixed mode)

I'll take a look.

Thanks again,
-r

Re: Understanding issue with automatic buffer swapping


In reply to this post by Xerxes Rånby

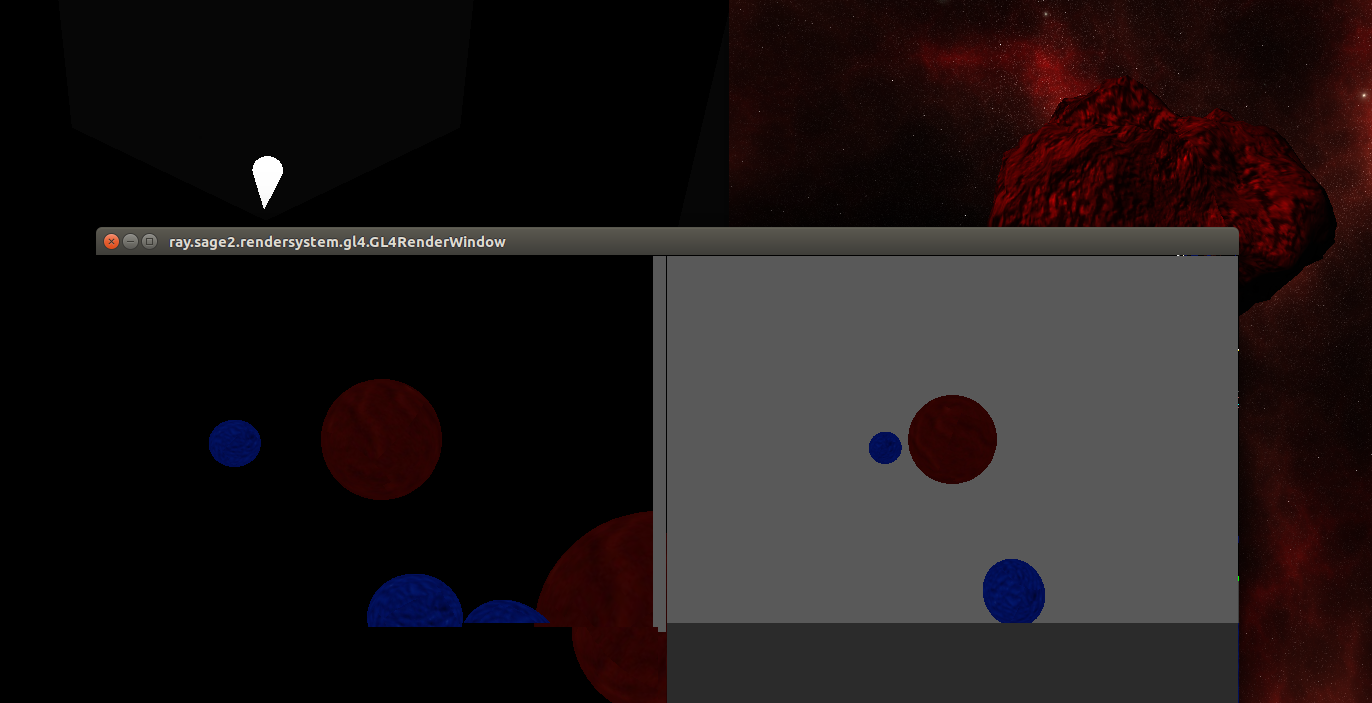

I see the same issue, where every second frame uses one un-cleared buffer, when running the MultiViewportTest (AWT) on Ubuntu 16.10 + AZUL 8 121 + OpenGL 4.5 compatible Mesa drivers for AMD.

AsteroidSkyBoxTest and SpotLightTest render fine with this setup, as well as on the Windows setup. Only the MultiViewportTest ends up rendering on top of an un-cleared buffer.

I am using this repository, which contains Mesa 17 with OpenGL 4.5 support for AMD APUs: https://launchpad.net/~oibaf/+archive/ubuntu/graphics-drivers

Platform: Java Version: 1.8.0_121 (1.8.0u121), VM: OpenJDK 64-Bit Server VM, Runtime: OpenJDK Runtime Environment
Platform: Java Vendor: Azul Systems, Inc., http://www.azulsystems.com/, JavaSE: true, Java6: true, AWT enabled: true
X11GraphicsDevice[type .x11, connection :0]:
Natives
    GL4bc false
    GL4 true [4.5 (Core profile, arb, compat[ES2, ES3, ES31, ES32], FBO, hardware)]
    GLES3 true [3.1 (ES profile, arb, compat[ES2, ES3, ES31], FBO, hardware)]
    GL3bc false
    GL3 true [4.5 (Core profile, arb, compat[ES2, ES3, ES31, ES32], FBO, hardware)]
    GL2 true [3.0 (Compat profile, arb, compat[ES2], FBO, hardware)]
    GLES2 true [3.1 (ES profile, arb, compat[ES2, ES3, ES31], FBO, hardware)]
    GLES1 true [1.1 (ES profile, arb, compat[FP32], hardware)]
    Count 6 / 8
Common
    GL4ES3 true
    GL2GL3 true
    GL2ES2 true
    GL2ES1 true
Mappings
    GLES1 GLProfile[GLES1/GLES1.hw]
    GLES2 GLProfile[GLES2/GLES3.hw]
    GL2ES1 GLProfile[GL2ES1/GL2.hw]
    GL4ES3 GLProfile[GL4ES3/GL4.hw]
    GL2ES2 GLProfile[GL2ES2/GL4.hw]
    GL2 GLProfile[GL2/GL2.hw]
    GLES3 GLProfile[GLES3/GLES3.hw]
    GL4 GLProfile[GL4/GL4.hw]
    GL3 GLProfile[GL3/GL4.hw]
    GL2GL3 GLProfile[GL2GL3/GL4.hw]
    default GLProfile[GL2/GL2.hw]
    Count 10 / 12
Swap Interval 1
GL Profile GLProfile[GL2/GL2.hw]
GL Version 3.0 (Compat profile, arb, compat[ES2], FBO, hardware) - 3.0 Mesa 17.1.0-devel [GL 3.0.0, vendor 17.1.0 (Mesa 17.1.0-devel)]
Quirks [NoDoubleBufferedPBuffer, NoSetSwapIntervalPostRetarget]
Impl. class jogamp.opengl.gl4.GL4bcImpl
GL_VENDOR X.Org
GL_RENDERER Gallium 0.4 on AMD MULLINS (DRM 2.46.0 / 4.8.0-39-generic, LLVM 4.0.0)
GL_VERSION 3.0 Mesa 17.1.0-devel
GLSL true, has-compiler-func: true, version: 1.30 / 1.30.0
GL FBO: basic true, full true
GL_EXTENSIONS 241
GLX_EXTENSIONS 30

Re: Understanding issue with automatic buffer swapping

When you do manual buffer swapping, I recommend looking into how to correctly handle framebuffers.

* Make sure that you call glClear to clear the buffers before rendering // clear depth/stencil/color contents
* Make sure that you call glInvalidateFramebuffer after rendering and before swapping buffers, to improve performance and to prevent dirty buffers from persisting into the next frame // avoid storing the depth/stencil contents

I expect the artifacts seen on some of my devices are caused by missing calls for both.

https://community.arm.com/graphics/b/blog/posts/mali-performance-2-how-to-correctly-handle-framebuffers
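Why a missing clear can produce extra copies of an object is easy to see in a toy model. The sketch below is not OpenGL and not sage2 code: `ToySwapChain` and `StaleBufferDemo` are hypothetical names, the framebuffer is a row of characters, and the model assumes that swapping simply exchanges two buffers whose old contents survive. Real drivers make no such promise about back-buffer contents after a swap, which is part of why clearing every frame matters.

```java
import java.util.Arrays;

/** Toy double-buffered framebuffer: each pixel is a char and '.' is the background. */
final class ToySwapChain {
    private char[] front;
    private char[] back;

    ToySwapChain(int width) {
        front = new char[width];
        back = new char[width];
        Arrays.fill(front, '.');
        Arrays.fill(back, '.');
    }

    void clear() { Arrays.fill(back, '.'); }  // wipe the back buffer
    void plot(int x, char c) { back[x] = c; } // draw one pixel into the back buffer
    void swap() { char[] tmp = front; front = back; back = tmp; }
    String visible() { return new String(front); }
}

final class StaleBufferDemo {
    /** Draws an 'o' that moves one pixel per frame and returns what is visible after the last frame. */
    static String run(int frames, boolean clearEachFrame) {
        ToySwapChain chain = new ToySwapChain(8);
        for (int frame = 0; frame < frames; frame++) {
            if (clearEachFrame) {
                chain.clear();
            }
            chain.plot(frame, 'o');
            chain.swap();
        }
        return chain.visible();
    }
}
```

After five frames, `StaleBufferDemo.run(5, true)` shows `....o...`, while `StaleBufferDemo.run(5, false)` shows `o.o.o...`: the current object plus two stale copies left over from earlier frames drawn into the same buffer.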


I was finally able to test a bit on my Windows 7 x64 environment, with the same 2GB GTX-770 card on driver 373.06.

The MultiViewportTest shows severe flickering when JOGL's default auto-swapping is enabled. If I revert my original workaround and go back to manual swapping, then the test looks correct (i.e. the severe flickering is gone). My guess is that it's related to my previous question regarding how to properly render to multiple viewports in a single frame/pass, and that JOGL's auto buffer swapping is swapping 4 times per "frame".

I'd still like to hear comments on the proper way to work with multiple viewports. Currently, the contents of each viewport are rendered in separate calls to canvas.display(), i.e. if there are 4 viewports in a window, then 4 display(GLAutoDrawable) invocations will be made, each drawing only to its viewport's section (see later).

In both cases (auto/manual swapping), I've had calls to glClearBufferfv(...) for GL_COLOR and GL_DEPTH from very early in development. See GL4RenderSystem.java:194-195

I was not familiar with glFramebuffer/glInvalidateFramebuffer, so I'll look into it in more detail soon.

I read the article. While I still need to process some of the framebuffer-related stuff and check more references (OpenGL SB6, though I've been burned by errors there before...), there's something that caught my attention: the article recommends that you always clear all the buffers, i.e. call glClear(/*color | depth | stencil | ...*/).

As mentioned at first, my window can have multiple viewports, each with its own clear color, etc. If I just call glClear at the beginning of the frame, instead of trying to use a stencil to clear only a section of the window (i.e. the viewport), then, when multiple viewports are used, the entire thing gets messed up. From what I understood, the article says that trying to clear only a section of the framebuffer with a stencil (i.e. what I've been doing) is a common mistake. So, if someone can offer recommendations regarding how to properly handle multiple viewports in the same window, that'd be helpful.

Thanks in advance,
-r

PS: I hope to get to test in Windows 10 soon, depending on whether it's still relevant.
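For reference, in OpenGL a clear is not limited by the viewport, but it is limited by the scissor box when the scissor test is enabled (`glScissor` with `GL_SCISSOR_TEST`). The following sketch models that idea without any GL calls: it splits a window into four quadrant rectangles and fills each one with its own clear colour. `Rect`, `ToyFramebuffer`, and `Viewports.quadrants` are hypothetical names, and whether sage2 restricts its clears this way is an assumption, not something stated in the thread.

```java
/** Axis-aligned rectangle in window pixels, with the origin at the lower-left corner as in OpenGL. */
final class Rect {
    final int x, y, width, height;

    Rect(int x, int y, int width, int height) {
        this.x = x;
        this.y = y;
        this.width = width;
        this.height = height;
    }
}

/** Toy colour buffer indexed as pixels[row][column], where row 0 is the bottom row. */
final class ToyFramebuffer {
    final int[][] pixels;

    ToyFramebuffer(int width, int height) {
        pixels = new int[height][width];
    }

    /** Fills only the given rectangle, like a clear restricted by a scissor box. */
    void clearRegion(Rect r, int color) {
        for (int row = r.y; row < r.y + r.height; row++) {
            for (int col = r.x; col < r.x + r.width; col++) {
                pixels[row][col] = color;
            }
        }
    }
}

final class Viewports {
    /** Splits a window into quadrants: lower-left, lower-right, upper-left, upper-right. */
    static Rect[] quadrants(int windowWidth, int windowHeight) {
        int halfW = windowWidth / 2;
        int halfH = windowHeight / 2;
        // The right and upper quadrants absorb the extra pixel when a dimension is odd.
        return new Rect[] {
            new Rect(0, 0, halfW, halfH),
            new Rect(halfW, 0, windowWidth - halfW, halfH),
            new Rect(0, halfH, halfW, windowHeight - halfH),
            new Rect(halfW, halfH, windowWidth - halfW, windowHeight - halfH)
        };
    }
}
```

Clearing each quadrant with its own colour touches every pixel exactly once per frame, so no region is left holding contents from an earlier frame, and no quadrant overwrites another.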


While not directly related to your viewport question, this is how I "sidestep" the issue: I render each camera to a texture, and then I composite the textures into a single texture, which is the one I finally render to screen. This way I can composite the camera views on top of each other, etc.
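The compositing approach can be sketched on the CPU with plain arrays. This is only a model of the idea, not GPU code and not the poster's implementation: each camera's texture is stood in for by an `int[][]` image, and `Compositor.solid` and `Compositor.blit` are hypothetical helpers.

```java
import java.util.Arrays;

final class Compositor {
    /** Creates an image filled with one colour; it stands in for one camera's render target. */
    static int[][] solid(int width, int height, int color) {
        int[][] image = new int[height][width];
        for (int[] row : image) {
            Arrays.fill(row, color);
        }
        return image;
    }

    /** Copies source into target so that source's first row and column land at (x, y). */
    static void blit(int[][] source, int[][] target, int x, int y) {
        for (int row = 0; row < source.length; row++) {
            System.arraycopy(source[row], 0, target[y + row], x, source[row].length);
        }
    }
}
```

Because every camera draws into its own image, each one can be cleared in full with its own colour, and only the final composite needs to be presented, once per frame.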


I'll probably have to wait until later during summer/fall before I can take a closer detailed look at the issue and work to solve it. For the time being, I'm documenting the problems in the issue tracker.

Thanks for all of your suggestions and help.
-r

PS: Please note that previous URLs are dead. The current URL is https://gitlab.com/ghost-in-the-zsh/rage
